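Several interpretations use the 68-95-99.7 rule for normally distributed data. The following statements create the three line plots with error bars:

    %macro PlotMeanAndVariation(limitstat=, label=);
       title "VLINE Statement: LIMITSTAT = &limitstat";
       /* The PROC SGPLOT, RUN, and %MEND lines are reconstructed; the data set
          Sim is the one passed to PROC MEANS in this article */
       proc sgplot data=Sim;
          vline t / response=y stat=mean limitstat=&limitstat markers;
          yaxis label="&label" values=(75 to 82) grid;
       run;
    %mend PlotMeanAndVariation;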
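The 68-95-99.7 rule mentioned above can be checked numerically. The following is a minimal Python sketch, not part of the original SAS program; the helper name `prob_within` is hypothetical and only the standard library is used.

```python
from statistics import NormalDist


def prob_within(k: float) -> float:
    """Probability that a normal variate lies within k standard deviations of its mean."""
    z = NormalDist()  # standard normal distribution: mean 0, standard deviation 1
    return z.cdf(k) - z.cdf(-k)


for k in (1, 2, 3):
    # Prints roughly 0.6827, 0.9545, and 0.9973
    print(f"within {k} SD of the mean: {prob_within(k):.4f}")
```

This is why error bars of one standard deviation are often read as covering about 68% of normally distributed data, and two standard deviations about 95%.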

# How to plot mean and standard deviation in Excel

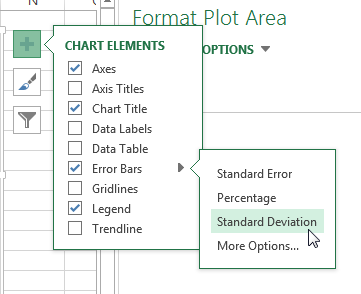

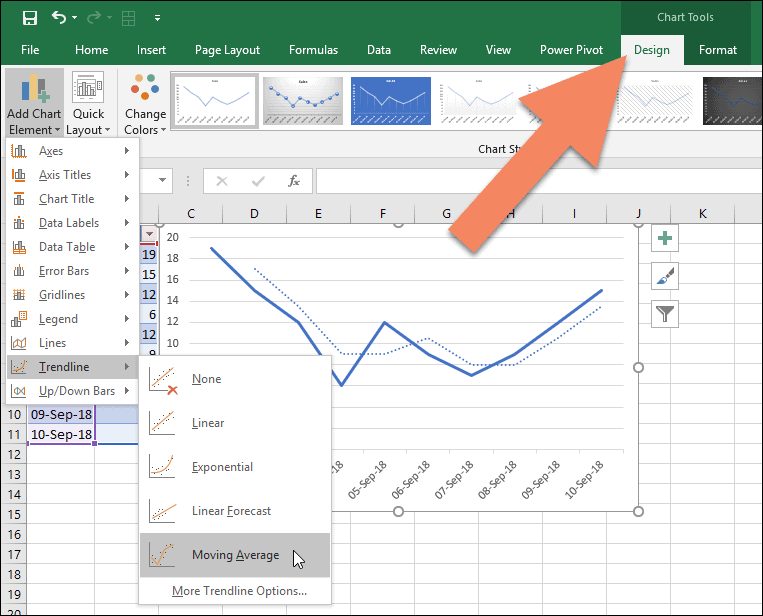

The half-width of the confidence interval for the mean is a multiple of the SEM. For large samples, the multiplier for a 95% confidence interval is approximately 1.96. In general, suppose the significance level is α and you are interested in 100(1-α)% confidence limits. Then the multiplier is a quantile of the t distribution with N-1 degrees of freedom, often denoted by $t^*_{1-\alpha/2,\,N-1}$.

You can use PROC MEANS and a short DATA step to display the relevant statistics that show how these three statistics are related:

    /* Optional: Compute SD, SEM, and half-width of CLM (not needed for plotting) */
    proc means data=Sim noprint;
       class t;     /* reconstructed: one row of statistics per time point */
       var y;       /* reconstructed: the response variable */
       output out=MeanOut N=N stderr=SEM stddev=SD lclm=LCLM uclm=UCLM;
    run;

    data Summary;   /* reconstructed: the data set name is an assumption */
       set MeanOut(where=(_TYPE_=1));   /* keep only the per-time-point rows */
       CLMWidth = (UCLM-LCLM)/2;        /* half-width of CLM interval */
    run;

The table shows the standard deviation (SD) and the sample size (N) for each time point. The CLMWidth value is a little more than twice the SEM value. (The multiplier depends on N. For these data, it ranges from 2.03 to 2.06.) The values in the SD, SEM, and CLMWidth columns are the lengths of the error bars when you use the STDDEV, STDERR, and CLM options (respectively) to the LIMITSTAT= option on the VLINE statement in PROC SGPLOT.

## Visualize and interpret the choices of error bars

Let's plot all three options for the error bars on the same scale, then discuss how to interpret each graph.

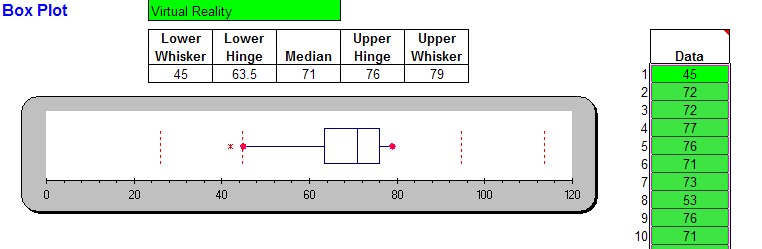

The example data are simulated from normal distributions. The RAND function for the normal distribution takes the mean and standard deviation as arguments; the arrays below supply those values and the sample size for each time point:

    data Sim;   /* data set name taken from the PROC MEANS call in this article */
       array mu[4]    _temporary_ (80 78 78 79);   /* mean */
       array sigma[4] _temporary_ (1 2 2 3);       /* std dev */
       array N[4]     _temporary_ (36 32 28 25);   /* sample size */
       call streaminit(12345);
       /* The loop structure is reconstructed; the time values 1-4 are an assumption */
       do t = 1 to dim(mu);
          do i = 1 to N[t];
             y = rand("Normal", mu[t], sigma[t]);  /* Y ~ N(mu, sigma) */
             output;
          end;
       end;
       keep t y;
    run;

The box plot shows the schematic distribution of the data at each time point. The boxes use the interquartile range and whiskers to indicate the spread of the data. A line connects the means of the responses at each time point.

A box plot might not be appropriate if your audience is not statistically savvy. A simpler display is a plot of the mean for each time point and error bars that indicate the variation in the data. But what statistic should you use for the heights of the error bars? What is the best way to show the variation in the response variable?

## Relationships between sample standard deviation, SEM, and CLM

Before I show how to plot and interpret the various error bars, I want to review the relationships between the sample standard deviation, the standard error of the mean (SEM), and the (half) width of the confidence interval for the mean (CLM). These statistics are all based on the sample standard deviation (SD). The SEM and width of the CLM are multiples of the standard deviation, where the multiplier depends on the sample size:

- $\mathrm{SEM} = \mathrm{SD} / \sqrt{N}$. That is, the standard error of the mean is the standard deviation divided by the square root of the sample size.
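The relationship between the SD, the SEM, and the half-width of the confidence interval can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the article's SAS computation: the function name `error_bar_lengths` and the sample values are made up, and the multiplier is the large-sample normal quantile (about 1.96) rather than the exact t quantile.

```python
from math import sqrt
from statistics import NormalDist, stdev


def error_bar_lengths(sample, alpha=0.05):
    """Return the SD, the SEM, and a large-sample approximation of the CLM half-width."""
    n = len(sample)
    sd = stdev(sample)                        # sample standard deviation (N-1 denominator)
    sem = sd / sqrt(n)                        # standard error of the mean
    z = NormalDist().inv_cdf(1 - alpha / 2)   # normal quantile, about 1.96 for alpha=0.05
    return {"N": n, "SD": sd, "SEM": sem, "CLM_approx": z * sem}


# Made-up values for illustration only; they are not the article's simulated data.
sample = [79.1, 80.4, 78.8, 81.0, 80.2, 79.6, 80.9, 79.5]
stats = error_bar_lengths(sample)
for name, value in stats.items():
    print(name, round(value, 4))
```

For small samples the exact multiplier is a t quantile with N-1 degrees of freedom, which is larger than 1.96, so this approximation gives a slightly narrower interval than the CLM option would.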

Normalizing (or standardizing) is an essential step in analyzing large datasets. Finding the z-scores of sample data based on the standard deviation and mean of the entire data set can help you achieve a more manageable workload. This is especially true when comparing various sets of data. In this article, we are going to show you how to normalize data in Excel.

Normalization in this case essentially means standardization. Standardization is the process of transforming data based on the mean and standard deviation for the whole set. Thus, transformed data refers to a standard distribution with a mean of 0 and a variance of 1. In a normalized data set, the positive values represent values above the mean, and the negative values represent values below the mean. For example, +1 means that a particular value is one standard deviation above the mean, and -1 means the opposite.

## Normalizing data in Excel

Excel has a function called STANDARDIZE which calculates and returns the normalized value from a distribution characterized by arithmetic mean and standard deviation.
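The calculation behind standardization is the z-score formula, (x - mean) / standard deviation. A minimal Python sketch of that arithmetic follows; the function name `standardize` and the data values are hypothetical, and the population standard deviation is used on the assumption that the data set is treated as the whole population.

```python
from statistics import mean, pstdev


def standardize(x, mu, sigma):
    """Return the z-score of x for a distribution with mean mu and standard deviation sigma."""
    if sigma <= 0:
        raise ValueError("standard deviation must be positive")
    return (x - mu) / sigma


# Made-up values for illustration only.
values = [4.0, 8.0, 6.0, 5.0, 7.0]
mu = mean(values)        # arithmetic mean of the whole set
sigma = pstdev(values)   # population standard deviation of the whole set

z_scores = [standardize(v, mu, sigma) for v in values]
print([round(z, 3) for z in z_scores])
```

The standardized values have a mean of 0 and a standard deviation of 1, and a value equal to the mean maps to 0.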